Your FAQ Page Is Your New Homepage for AI Search

Table of Contents

- Why LLMs Prefer FAQ Pages Over Blog Posts

- The Technical Structure That Gets Cited

- Use Static HTML, Not JavaScript Accordions

- Semantic Heading Hierarchy

- Topic Cluster Organization

- FAQPage Schema Markup

- Machine-Readable Freshness Signals

- Mining the Right Questions

- Google Search Console Query Mining

- ChatGPT and Perplexity Reverse Engineering

- Sales and Support Conversation Mining

- Competitive Gap Analysis

- Answer Architecture for Maximum Citability

- Part 1: Direct Answer (First Sentence)

- Part 2: Context and Specifics (Second Paragraph)

- Part 3: Practitioner Perspective (Third Paragraph)

- Part 4: Connector (Final Sentence)

- Target Length

- SEO-Optimized FAQs vs. GEO-Optimized FAQs

- Update Cadence and Maintenance

- The ROI Math

- What We See Companies Getting Wrong

- The Land Grab

- Related Resources

- Frequently Asked Questions

- What is the difference between an SEO FAQ and a GEO FAQ?

- How many questions should a GEO-optimized FAQ page have?

- Do FAQ pages need to be in static HTML for AI search?

- How often should a GEO FAQ page be updated?

Most B2B SaaS companies treat their FAQ page like a compliance checkbox. A dozen generic questions, two-sentence answers, stuffed inside a collapsible accordion that marketing updates once a year. It sits at the bottom of the sitemap doing nothing.

Meanwhile, that FAQ page is the single highest-ROI asset you can build for generative engine optimization.

One agency recently reported $506K in contract value over four months from LLM-optimized content, with referral traffic from AI models growing 7.6x during that period. The content types driving those numbers were not 3,000-word thought leadership posts or viral LinkedIn threads. They were structured, question-formatted pages that mapped directly to how language models retrieve and cite information.

We build these for clients. The pattern is consistent: a well-structured FAQ page outperforms an entire blog in LLM citation frequency because it is formatted for extraction, not browsing. Here is the technical implementation guide for doing it yourself.

Why LLMs Prefer FAQ Pages Over Blog Posts

To build an effective GEO FAQ, you need to understand what makes content citable by language models.

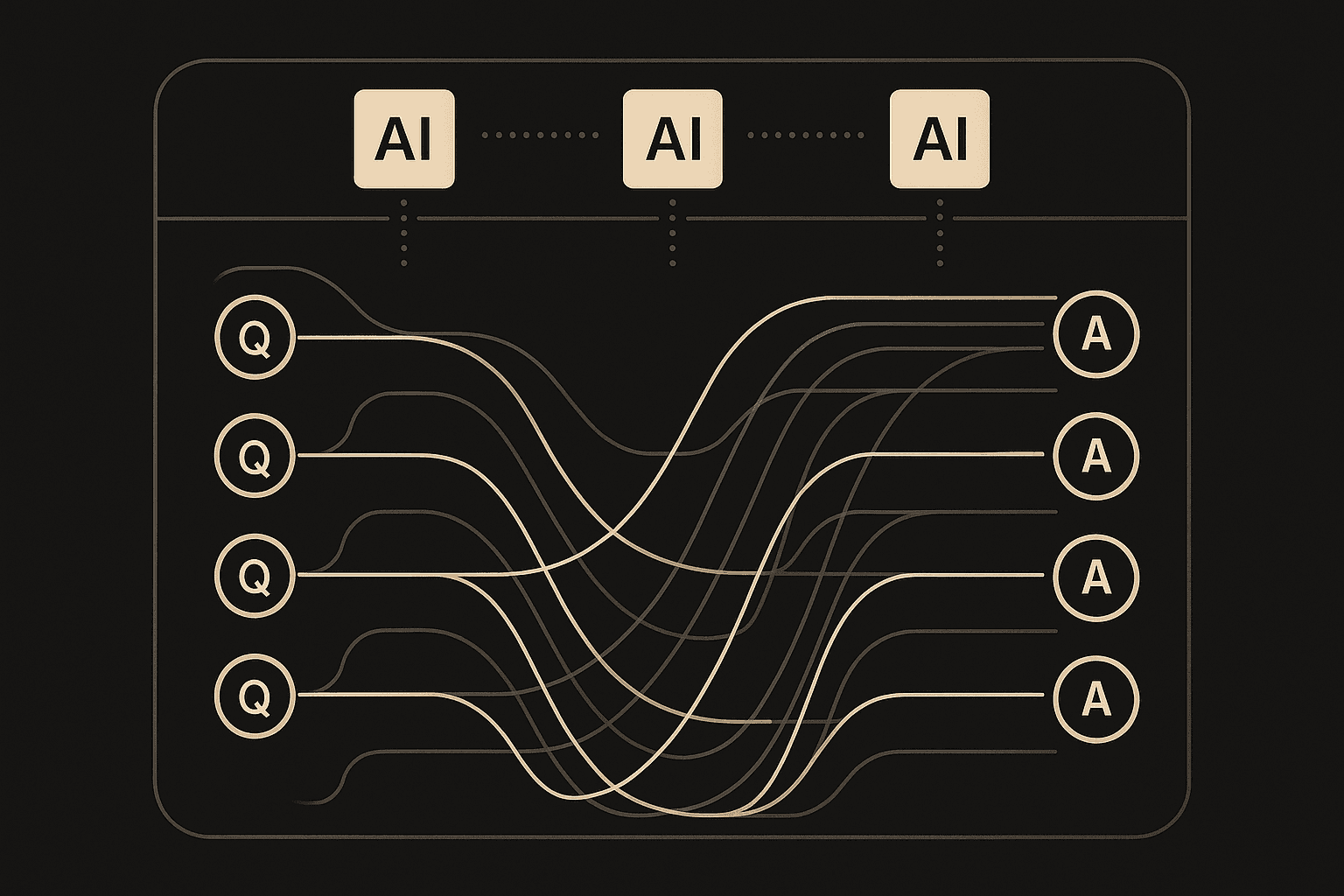

LLMs do not rank pages the way Google does. They do not count backlinks or evaluate keyword density. When a retrieval-augmented model like ChatGPT with browsing or Perplexity answers a query, it fetches web content in real time, evaluates it for relevance and authority, then decides which sources to cite in its synthesized response.

Three properties determine whether your content gets cited.

Structured extractability. LLMs need to identify the question being asked and the answer being given. A paragraph buried in the eighth section of a blog post might contain the right answer, but a cleanly structured Q&A pair with the question as an HTML heading and the answer directly below it is orders of magnitude easier for the model to find, extract, and attribute. The easier you make extraction, the more citations you earn.

Entity precision. Models need to understand what your company does, who you serve, and how you differ from alternatives. Pages that use consistent product names, specific service descriptions, and unambiguous company language give models the semantic scaffolding to recommend you in the right context. Vague copy like “we help companies grow” gives the model nothing to work with.

Topical authority density. A single FAQ page covering 40 to 60 deeply specific questions about your domain creates a dense node of citable content. Each question-answer pair is a potential citation target. Compare that to a blog where the same information is scattered across 30 posts and diluted with narrative filler.

Your FAQ page, built correctly, hits all three simultaneously. It is a structured, entity-rich, topically dense extraction target. That combination is why it outperforms other content types for AI search visibility.

The Technical Structure That Gets Cited

The HTML structure of your FAQ page determines whether LLMs can extract your answers. Get this wrong and nothing else matters.

Use Static HTML, Not JavaScript Accordions

Every question and every answer must be present in the raw HTML when the page loads. No click-to-expand widgets. No JavaScript-rendered content. No dynamically loaded sections.

Most popular CMS FAQ plugins use accordion components that hide answer text behind data-toggle attributes or load content via AJAX. Retrieval systems used by ChatGPT, Perplexity, and Claude parse HTML. If the answer text is not in the initial HTML response, it does not exist to the model.

Server-side rendered or statically generated pages are the standard. If you are running a headless CMS with Next.js or similar, your FAQ content needs to be in the build output, not fetched client-side.
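One way to test this is to check whether each answer's text appears in the HTML before any JavaScript runs. The sketch below is a minimal, standard-library version of that check. The function names and the sample page are hypothetical, and fetching the page (for example with a plain HTTP client that does not execute scripts) is left out.

```python
import re
from html import unescape


def visible_text(raw_html: str) -> str:
    """Strip scripts, styles, and tags from raw HTML and collapse whitespace."""
    without_code = re.sub(r"(?is)<(script|style)\b.*?</\1>", " ", raw_html)
    without_tags = re.sub(r"(?s)<[^>]+>", " ", without_code)
    return re.sub(r"\s+", " ", unescape(without_tags)).strip()


def missing_answers(raw_html: str, expected_snippets: list[str]) -> list[str]:
    """Return the snippets that do not appear in the un-rendered HTML."""
    text = visible_text(raw_html).lower()
    return [s for s in expected_snippets if " ".join(s.split()).lower() not in text]


# Hypothetical page: one answer is in the markup, the other is injected by JavaScript.
page = """
<h2>How long does onboarding take?</h2>
<p>Onboarding takes two to four weeks.</p>
<h2>Do you offer an API?</h2>
<div id="answer-2"></div>
<script>loadAnswer("answer-2", "Yes, a REST API is included.");</script>
"""

print(missing_answers(page, [
    "Onboarding takes two to four weeks.",
    "Yes, a REST API is included.",
]))
# → ['Yes, a REST API is included.']
```

Any snippet this returns is text that only exists after client-side rendering, which is the case this section warns about.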

Semantic Heading Hierarchy

Each question should be an <h2> or <h3> element. The answer follows immediately in standard <p> tags. This is the signal structure that retrieval systems use to identify content boundaries.

<h2>How much does enterprise CRM implementation cost?</h2>

<p>Enterprise CRM implementation typically costs between $50,000 and $300,000 depending on team size, integration complexity, and customization requirements. Mid-market companies (100-500 employees) most commonly spend $75,000 to $150,000 for a full deployment including data migration and training.</p>

<p>The primary cost drivers are...</p>

Do not use <div> elements with class names as question containers. Do not use <span> or <strong> tags for questions. Use proper heading elements. LLMs parse semantic HTML structure, and headings are the clearest signal of content hierarchy.
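To see why the heading-plus-paragraph structure is easy to extract, here is a rough sketch of a parser that pairs each heading with the paragraphs below it. It uses Python's built-in html.parser and is only an illustration of the idea. It is not how any particular retrieval system works, and the class and function names are our own.

```python
from html.parser import HTMLParser


class QAExtractor(HTMLParser):
    """Collect question/answer pairs from <h2>/<h3> headings and the <p> tags after them."""

    def __init__(self) -> None:
        super().__init__()
        self.pairs = []           # list of {"question": ..., "answer": ...}
        self._current_tag = None  # "heading", "p", or None

    def handle_starttag(self, tag, attrs):
        if tag in ("h2", "h3"):
            self.pairs.append({"question": "", "answer": ""})
            self._current_tag = "heading"
        elif tag == "p" and self.pairs:
            self._current_tag = "p"

    def handle_endtag(self, tag):
        if tag in ("h2", "h3", "p"):
            self._current_tag = None

    def handle_data(self, data):
        if not self.pairs or self._current_tag is None:
            return  # text outside headings and paragraphs is ignored
        key = "question" if self._current_tag == "heading" else "answer"
        # Join consecutive paragraphs and inline fragments with a single space.
        self.pairs[-1][key] = (self.pairs[-1][key] + " " + data.strip()).strip()


def extract_pairs(raw_html: str) -> list[dict[str, str]]:
    parser = QAExtractor()
    parser.feed(raw_html)
    # Headings with no paragraph below them (such as topic-level headings) are dropped.
    return [pair for pair in parser.pairs if pair["answer"]]


sample = (
    "<h2>Pricing</h2>"
    "<h3>What does it cost?</h3><p>It costs $10.</p><p>Billed monthly.</p>"
    "<div class='question'>Is there a trial?</div>"
)
print(extract_pairs(sample))
# → [{'question': 'What does it cost?', 'answer': 'It costs $10. Billed monthly.'}]
```

The question wrapped in a <div> never shows up in the output. This simple parser has no way to tell it is a question, which is the failure mode that proper heading elements avoid.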

Topic Cluster Organization

Group related questions under topic-level headings. A flat list of 50 unrelated questions is harder for models to navigate than 5 clusters of 10 related questions.

<h2>Pricing and Engagement Models</h2>

<h3>How much does a typical SEO engagement cost for a Series A SaaS company?</h3>

<p>...</p>

<h3>What is included in a monthly SEO retainer versus a one-time audit?</h3>

<p>...</p>

<h2>Technical Implementation</h2>

<h3>How do you handle CMS migrations during an SEO engagement?</h3>

<p>...</p>

This cluster structure creates clear semantic sections. When a model processes your page, it can identify which topic cluster a question belongs to and evaluate your authority within that specific subtopic.
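If your questions live in a spreadsheet or CMS export, the cluster markup can be generated rather than hand-written. This is a minimal sketch assuming the content is already grouped as a mapping of topic to question-answer pairs. The function name and data shape are hypothetical.

```python
from html import escape


def render_clusters(clusters: dict[str, list[tuple[str, str]]]) -> str:
    """Render {topic: [(question, answer), ...]} as <h2> topics with <h3> questions."""
    lines = []
    for topic, pairs in clusters.items():
        lines.append(f"<h2>{escape(topic)}</h2>")
        for question, answer in pairs:
            lines.append(f"<h3>{escape(question)}</h3>")
            lines.append(f"<p>{escape(answer)}</p>")
    return "\n".join(lines)


print(render_clusters({
    "Pricing and Engagement Models": [
        ("What is included in a monthly SEO retainer versus a one-time audit?", "..."),
    ],
}))
```

Running this at build time keeps every question and answer in the static output instead of being assembled in the browser.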

FAQPage Schema Markup

Add JSON-LD structured data using the FAQPage schema type. This is not the primary driver for LLM citation (the HTML structure is), but it provides an additional extraction signal. Treat Google's FAQ rich results as a possible bonus rather than a given: since 2023 Google has shown them mainly for well-known government and health sites.

{
  "@context": "https://schema.org",
  "@type": "FAQPage",
  "mainEntity": [
    {
      "@type": "Question",
      "name": "How much does enterprise CRM implementation cost?",
      "acceptedAnswer": {
        "@type": "Answer",
        "text": "Enterprise CRM implementation typically costs between $50,000 and $300,000 depending on team size, integration complexity, and customization requirements."
      }
    }
  ]
}

Place the JSON-LD in a <script type="application/ld+json"> tag in the page <head> or at the end of the <body>. Include every question-answer pair on the page. Keep the text field in each Answer concise (the first 1-2 sentences of your full answer). The expanded answer lives in the HTML body where models extract it directly.
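With 40 to 60 questions, maintaining the JSON-LD by hand invites drift between the markup and the visible answers. The sketch below builds the FAQPage object from the same question-answer pairs and keeps only the first two sentences of each answer. The helper names are hypothetical, and the sentence splitter is deliberately naive (it will miscut on abbreviations such as "e.g. "), so treat it as a starting point.

```python
import json
import re


def first_sentences(text: str, count: int = 2) -> str:
    """Return the first `count` sentences, splitting on ., ! or ? followed by whitespace."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return " ".join(sentences[:count])


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build FAQPage JSON-LD from (question, full_answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": first_sentences(answer)},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


print(faq_jsonld([
    (
        "How much does enterprise CRM implementation cost?",
        "Enterprise CRM implementation typically costs between $50,000 and $300,000. "
        "Mid-market companies most commonly spend $75,000 to $150,000. "
        "The primary cost drivers are...",
    ),
]))
```

The output goes inside the <script type="application/ld+json"> tag. Generating it from the same source as the visible HTML keeps the two in sync.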

Machine-Readable Freshness Signals

Add a visible last-updated date on the page in a machine-readable format.

<p>Last updated: <time datetime="2026-04-07">April 7, 2026</time></p>

Retrieval-augmented models weight freshness. A FAQ page updated this month will outperform an identical page last updated six months ago. The <time> element with a datetime attribute is the cleanest signal.
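If the page is generated at build time, the timestamp can be produced from a date value so the human-readable label and the datetime attribute never disagree. This is a small sketch with a hypothetical helper name. Month names are hard-coded in English so the output does not depend on the system locale.

```python
from datetime import date

MONTHS = (
    "January", "February", "March", "April", "May", "June",
    "July", "August", "September", "October", "November", "December",
)


def last_updated_html(updated: date) -> str:
    """Render a visible last-updated line with a machine-readable <time> element."""
    label = f"{MONTHS[updated.month - 1]} {updated.day}, {updated.year}"
    return f'<p>Last updated: <time datetime="{updated.isoformat()}">{label}</time></p>'


print(last_updated_html(date(2026, 4, 7)))
# → <p>Last updated: <time datetime="2026-04-07">April 7, 2026</time></p>
```

Pass in the date of the last substantive content change rather than the build date, so the signal reflects real updates.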

Mining the Right Questions

The questions on your FAQ page determine its citation potential. Generic questions like “Why choose us?” or “What makes us different?” are vanity questions that LLM users never ask. You need the actual queries your buyers type into ChatGPT and Perplexity.

Google Search Console Query Mining

Your GSC data contains the exact queries people use to find content in your category. Filter for question-format queries (starting with “how,” “what,” “why,” “when,” “which,” “can,” “does,” “should”) and sort by impressions.

In GSC, navigate to Performance, then filter by Query containing each question word. Export the results. You are looking for patterns: clusters of related questions that indicate buyer research behavior.

For a B2B SaaS company selling a data platform, you might find:

- “how to migrate from legacy data warehouse”

- “what is the cost of data platform implementation”

- “how long does data migration take for enterprise”

- “what integrations does a modern data platform need”

These are real buyer queries. Each one becomes an FAQ entry.
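Once the export is on disk, the filtering step is easy to script. The sketch below assumes a CSV with "Top queries" and "Impressions" columns. Check the headers in your own export, since those column names are an assumption here, as are the function name and sample rows. It keeps queries that start with one of the question words listed above and sorts them by impressions.

```python
import csv
import io

QUESTION_WORDS = ("how", "what", "why", "when", "which", "can", "does", "should")


def question_queries(csv_text: str, query_col: str = "Top queries",
                     impressions_col: str = "Impressions") -> list[tuple[str, int]]:
    """Return question-format queries sorted by impressions, highest first."""
    matches = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        query = row[query_col].strip().lower()
        first_word = query.split(" ", 1)[0]
        if first_word in QUESTION_WORDS:
            # Impressions may be exported with thousands separators.
            matches.append((query, int(row[impressions_col].replace(",", ""))))
    return sorted(matches, key=lambda item: item[1], reverse=True)


# Made-up export rows for illustration only.
export = """Top queries,Clicks,Impressions
how to migrate from legacy data warehouse,12,900
data platform pricing,30,2500
what is the cost of data platform implementation,8,1400
"""

for query, impressions in question_queries(export):
    print(impressions, query)
# → 1400 what is the cost of data platform implementation
# → 900 how to migrate from legacy data warehouse
```

The keyword-style query is dropped even though it has the most impressions, because it does not read as a question a buyer would put to an AI assistant.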

ChatGPT and Perplexity Reverse Engineering

Go to ChatGPT and Perplexity. Ask questions about your product category as if you were a buyer evaluating solutions. Look at three things in the responses:

- What sources get cited? Note the URL structure and content format of cited pages.

- What follow-up questions does the model suggest? These are queries your FAQ should answer.

- What format does the model use in its response? Match that format in your answers.

Run 20 to 30 queries across both platforms. Log which of your competitors get cited and examine their content structure. The patterns you find directly inform how to structure your own answers for maximum citability.
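A simple log makes the patterns easier to see. This sketch assumes you record each test query by hand as a dictionary with the platform, the query, and the URLs the response cited. That record format and the sample entries are hypothetical. It counts how many queries cited each domain.

```python
from collections import Counter
from urllib.parse import urlparse


def cited_domains(log: list[dict]) -> Counter:
    """Count, per domain, the number of logged queries whose response cited it."""
    counts = Counter()
    for entry in log:
        # A set, so a domain cited twice in one response is counted once.
        domains = {
            urlparse(url).netloc.lower().removeprefix("www.")
            for url in entry["cited_urls"]
        }
        counts.update(domains)
    return counts


# Made-up log entries for illustration only.
log = [
    {"platform": "ChatGPT", "query": "best data platform for mid-market SaaS",
     "cited_urls": ["https://www.example.com/faq", "https://example.com/blog/guide"]},
    {"platform": "Perplexity", "query": "data platform implementation cost",
     "cited_urls": ["https://example.org/pricing", "https://example.com/faq"]},
]

print(cited_domains(log).most_common())
# → [('example.com', 2), ('example.org', 1)]
```

The domains at the top of the list are the pages to open and study for structure.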

Sales and Support Conversation Mining

Your sales team and support inbox contain the questions buyers actually ask before purchasing. Pull the last 90 days of sales call transcripts, demo request forms, support tickets, and live chat logs. Categorize by theme and frequency.

The questions buyers ask your sales team are the same questions they ask ChatGPT. The difference is that ChatGPT answers 24 hours a day with whatever sources it finds. If your FAQ does not contain the answer, a competitor’s page will be cited instead.

Competitive Gap Analysis

Identify which questions your competitors answer that you do not. Crawl their FAQ pages, knowledge bases, and blog posts. For each question they cover, evaluate whether your answer would be more specific, more authoritative, or more current.

The goal is not to copy their questions verbatim. The goal is to ensure your FAQ covers the same buyer decision points with better, more specific, more entity-rich answers.

Answer Architecture for Maximum Citability

Every FAQ answer should follow a four-part structure optimized for LLM extraction.

Part 1: Direct Answer (First Sentence)

The opening sentence must be a complete, standalone answer to the question. This is the sentence the LLM will most likely extract as the core of its response.

Good: “The average cost of implementing a B2B SaaS CRM ranges from $15,000 to $150,000 depending on team size, existing tech stack complexity, and customization requirements.”

Bad: “The cost of CRM implementation depends on many factors that we’ll explore in this answer.”

The first version is immediately citable. The second is useless to a model that needs to synthesize a response.

Part 2: Context and Specifics (Second Paragraph)

Expand with numbers, timeframes, conditions, and scenarios that affect the answer. This is where you demonstrate expertise.

“Mid-market teams (50-200 employees) typically spend $40,000 to $80,000 on initial implementation over 8-12 weeks. Enterprise deployments (500+ employees) run $100,000 to $300,000 over 3-6 months due to data migration complexity and custom integration requirements. The largest cost variable is usually the number of existing tools that need bidirectional sync.”

Specific data points signal authority to the model. Every number, every timeframe, every concrete detail makes your answer more citable than a vague alternative.

Part 3: Practitioner Perspective (Third Paragraph)

Naturally weave in your company’s approach. Not a sales pitch. A practitioner’s answer that references your methodology because it is relevant.

“At SearchLever, we typically see the biggest implementation cost savings come from teams that document their existing workflow before choosing a CRM. Our diagnostic process maps data flows across sales, marketing, and CS before recommending an architecture. Teams that skip this step overspend by 30-40% on integrations they did not need.”

This positions your brand as the expert source while keeping the answer genuinely useful. The LLM cites your page because the answer is authoritative, and your brand gets embedded in the citation.

Part 4: Connector (Final Sentence)

Link to a related question or deeper resource on your site. This serves two purposes: it builds internal link equity for SEO, and it creates semantic connections that help models understand your content graph.

“For a breakdown of how CRM implementation timelines shift based on data migration complexity, see our answer on data migration planning below.”

Target Length

Each answer should run 150 to 300 words. Shorter and you lack the depth for citation. Longer and you dilute the core answer with filler.

SEO-Optimized FAQs vs. GEO-Optimized FAQs

If you already have a FAQ page built for traditional SEO, you cannot just add schema markup and call it GEO-ready. The optimization targets are fundamentally different.

Answer completeness. SEO FAQs often give partial answers to drive click-through. GEO FAQs give complete, self-contained answers because the LLM extracts and presents the answer directly. If your answer is incomplete, the model finds a competitor’s complete answer and cites that instead.

Question formatting. SEO FAQs target keyword density. GEO FAQs target natural language query matching. People ask ChatGPT full questions: “What is the best CRM for a 20-person B2B SaaS company?” They type keywords into Google: “best CRM B2B SaaS.” Your FAQ questions need to match the conversational format.

Answer depth. SEO FAQs favor brevity for featured snippets. GEO FAQs benefit from 150 to 300 word answers because language models have the context window to process longer responses and prefer answers that demonstrate expertise through detail.

Update frequency. SEO FAQs can sit static for months. GEO FAQs need monthly updates because retrieval-augmented models weight freshness. A FAQ last updated six months ago loses ground to a competitor that updates monthly.

Internal linking purpose. SEO FAQ links distribute link equity. GEO FAQ links build semantic connections that help models understand how your content topics relate to each other. Both matter, but the GEO value is in the entity graph, not the PageRank flow.

A good SEO FAQ can be a bad GEO FAQ. If you are starting from an existing page, plan to rebuild the answer architecture, not just layer schema on top.

Update Cadence and Maintenance

A GEO FAQ is not a build-once asset. The maintenance cadence directly affects citation longevity.

Monthly: question audit. Pull fresh GSC query data. Run 10 to 15 new queries in ChatGPT and Perplexity targeting your category. Identify new questions your FAQ does not yet answer. Add 3 to 5 new Q&A pairs per month.

Monthly: answer refresh. Review existing answers for outdated statistics, pricing, or tool references. Update any answer that references data more than 6 months old. Update the page timestamp every time you make substantive changes.

Quarterly: depth expansion. Select the 5 to 10 highest-value questions (based on GSC impressions and LLM citation frequency) and expand their answers with new data, updated benchmarks, or additional practitioner context.

Quarterly: competitive monitoring. Run your target queries in all major LLMs and track which competitors get cited. Read their cited content. Write more specific, more authoritative, more current answers.

The compounding dynamic here is real. Each update signals freshness to retrieval systems. Each new question expands your citation surface area. Each deepened answer strengthens your authority signal. Teams that maintain this cadence build an accelerating advantage over teams that build once and walk away.
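The monthly refresh is easier to keep up if stale answers are flagged automatically. This sketch assumes you track, for each question, the date of the newest data point its answer cites. That tracking field is hypothetical, and six months is approximated as 183 days.

```python
from datetime import date, timedelta


def stale_questions(data_dates: dict[str, date], today: date,
                    max_age_days: int = 183) -> list[str]:
    """Return questions whose newest cited data is older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(q for q, as_of in data_dates.items() if as_of < cutoff)


# Made-up tracking data for illustration only.
tracked = {
    "How much does implementation cost?": date(2025, 9, 1),
    "What integrations are supported?": date(2026, 2, 15),
}

print(stale_questions(tracked, today=date(2026, 4, 7)))
# → ['How much does implementation cost?']
```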

The ROI Math

Consider the numbers. A well-built GEO FAQ page takes 3 to 5 days of focused work to create (query mining, answer writing, technical implementation, schema markup). Ongoing maintenance is 4 to 6 hours per month.

Compare that to a traditional SEO content program: weekly blog posts, link building campaigns, technical audits, content refreshes. That is 40 to 80 hours per month of sustained effort.
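To put the two effort estimates on the same scale, here is the first-year arithmetic as a short sketch. The only number not taken from the figures above is the 8-hour working day used to convert build days into hours, which is our assumption.

```python
HOURS_PER_DAY = 8  # assumption: build time is quoted in days, not hours


def first_year_hours(build_days: float, monthly_hours: float) -> float:
    """One-time build effort plus twelve months of maintenance, in hours."""
    return build_days * HOURS_PER_DAY + monthly_hours * 12


faq_low, faq_high = first_year_hours(3, 4), first_year_hours(5, 6)
seo_low, seo_high = first_year_hours(0, 40), first_year_hours(0, 80)

print(f"GEO FAQ page: {faq_low:.0f} to {faq_high:.0f} hours in year one")
print(f"Traditional SEO content program: {seo_low:.0f} to {seo_high:.0f} hours in year one")
# → GEO FAQ page: 72 to 112 hours in year one
# → Traditional SEO content program: 480 to 960 hours in year one
```

On these estimates the FAQ page costs roughly a quarter to a thirteenth of the hours of a full content program in its first year.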

One agency saw a 7.6x increase in LLM referral traffic over four months after restructuring content into question-formatted pages. They attributed $506K in contract value to this channel in the same period.

A single FAQ page can outperform a 50-post blog in LLM citation frequency because the FAQ is formatted for extraction while the blog is formatted for reading. The ROI per hour of effort is not comparable. The FAQ wins by an order of magnitude.

We are not saying kill your blog. Your blog serves other purposes: thought leadership, long-tail organic traffic, social distribution. But if you are investing 40 hours per month in content and zero of those hours go toward your FAQ page as a GEO asset, you are leaving the highest-leverage play on the table.

What We See Companies Getting Wrong

The most common mistake is treating GEO as a cosmetic layer on existing SEO. Teams add FAQ schema to their current page, leave the two-sentence answers untouched behind JavaScript accordions, and wait for results. Nothing happens because nothing changed at the structural level.

The second mistake is writing FAQ answers in marketing-speak. “We provide industry-leading solutions for modern businesses” contains zero extractable information. LLMs cannot cite vagueness. Every answer needs specific numbers, concrete processes, and real expertise.

The third mistake is building the FAQ and never touching it again. Retrieval-augmented models fetch live content. A stale FAQ loses ground every month to competitors who refresh theirs. Freshness compounds. So does staleness.

The fourth mistake is targeting the wrong questions. Your marketing team’s wish-list questions (“Why should I choose us?”) are not the questions buyers ask language models. Buyers ask comparison questions, cost questions, implementation questions, and risk questions. Mine the real queries from GSC, sales conversations, and competitive analysis.

The Land Grab

The FAQ page is the cheapest GEO real estate you can claim right now. Here is why.

LLMs are still forming their source preferences. The models are learning, in real time, which domains provide reliable, structured, specific answers to industry questions. The companies that establish themselves as authoritative FAQ sources during this formative period will have a compounding advantage as models refine their citation behavior.

This mirrors the early SEO era. Companies that invested in search visibility between 2005 and 2010 built moats that lasted a decade. The companies that waited until 2015 found the cost of competing had multiplied tenfold.

The AI search version of this land grab is happening now. Most B2B SaaS companies have not rebuilt their FAQ architecture for LLM citation. The competition for structured Q&A citations is thin. That window will close as more teams recognize the opportunity, but today the cost of entry is a few days of focused work and a few hours of monthly maintenance.

At SearchLever, we see GEO as a standalone channel with its own strategy, measurement, and optimization loops. The FAQ page is not the entire GEO strategy. But it is the highest-ROI starting point, the easiest to build, and the fastest to show results.

Build your FAQ page like your pipeline depends on it. The early data says it does.

Related Resources

If you are building a GEO strategy alongside traditional SEO, these guides cover the broader context:

- GEO vs AEO vs SEO: What B2B SaaS Companies Need to Know About AI Search covers the strategic differences between these three optimization approaches.

- Content Marketing for SaaS: From Strategy to Pipeline Attribution provides the content framework that your FAQ page should integrate with.

- SaaS SEO: The Complete Guide to Organic Growth for B2B SaaS covers the full organic strategy, including how GEO fits alongside traditional SEO.

- AI Search Results: What the Early Data Tells Us analyzes the industry data behind AI search as a revenue channel.

Frequently Asked Questions

What is the difference between an SEO FAQ and a GEO FAQ?

An SEO FAQ targets long-tail keywords with brief answers designed to win featured snippets on Google. A GEO FAQ uses natural language questions that real buyers ask AI assistants, with 150-300 word answers structured for LLM extraction and citation. SEO FAQs optimize for click-through. GEO FAQs optimize for complete, self-contained answers because the LLM will present the answer directly.

How many questions should a GEO-optimized FAQ page have?

A GEO-optimized FAQ page should have 40-60 deeply specific questions organized into 5-8 topic clusters. Generic FAQ pages with 8-12 questions do not provide enough depth for LLMs to recognize topical authority. Each question should map to a natural language query that your target audience actually asks AI assistants like ChatGPT or Perplexity.

Do FAQ pages need to be in static HTML for AI search?

Yes. JavaScript-rendered FAQ accordions and collapsible widgets often fail to get indexed by LLM retrieval systems. Every question and answer must be present in the raw HTML when the page loads, using semantic H2 or H3 headings for questions and standard paragraph tags for answers. Server-side rendering or static site generation is required.

How often should a GEO FAQ page be updated?

Monthly at minimum. Run a question audit each month using ChatGPT query testing, Google Search Console data, and sales call transcripts to identify new questions, and refresh any answer with outdated statistics, pricing, or tool references. Expand the highest-value answers with new data quarterly. Retrieval-augmented LLMs weight freshness, so a FAQ page updated last month will outperform one unchanged for six months.

GTM & Growth Engineering

13+ years building revenue systems across B2B SaaS, fintech, and global operations. Previously at IBM, WorldRemit, Uber, and Janus Henderson. Clay Product Expert. Builds the GTM infrastructure and software layer that ties organic to pipeline.

SEO & Content Engineering

12+ years in technical SEO, currently SEO Manager EMEA at GoDaddy. Previously led SEO for Hawkers Group, Europe Assistance, Klorane, and Puressentiel. Founded Pixel News. Botify Pro certified. Specializes in site architecture, crawl optimization, and international SEO across 5 languages.